How are API rate limits set and implemented?

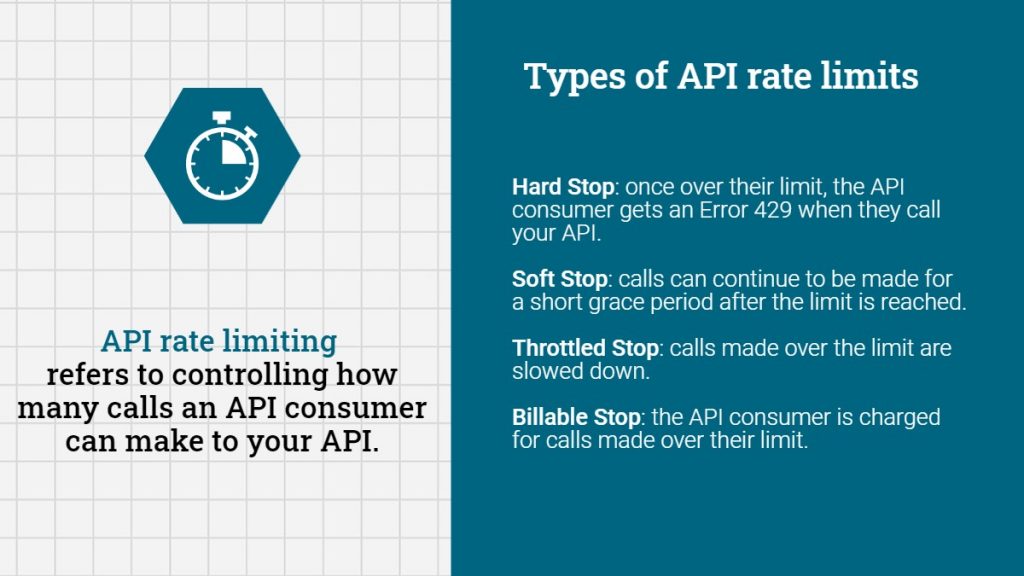

API rate limiting means controlling how many requests (or calls) an API consumer can make to your API in a given period. You may have run into it as a consumer, in the form of errors such as “too many connections” when visiting a website or using an app.

An API owner sets a limit on the number of requests, or the total amount of data, that a client can consume. This limit is called an API rate limit. Examples include a cap on the total number of API calls per month, or a fixed number of requests within some other period of time.

API rate limit frequently asked questions

Below are supporting details related to API rate limiting along with answers to frequently asked questions about this topic.

What is an API consumer?

An API consumer, at the most basic level, could be a mobile app, a website, or even your doorbell or thermostat! Anything that makes a call to an API to get data is an API consumer. Those calls are made via API requests.

What is an API request?

An API request (or call) is where one of these consumers, say your thermostat, requests information from “the cloud” (or servers on the internet).

Say your thermostat wants to find out what the current weather conditions are so that it can display those for you. That would be an API request.

The largest number of API requests, by far, comes from mobile apps. A single mobile app might make between 10 and 20 API requests just from you opening the app and logging in!

How are API rate limits typically set?

API rate limits are typically defined as a maximum number of requests allowed:

- per second

- per minute

- per hour

- per day (or 24-hour periods)

- per month

An API is not limited to picking just one of these. You could have one API rate limit per second and a different API rate limit per hour. One or more API rate limits can be active at the same time.
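As a rough illustration of several limits being active at once, here is a minimal sketch of a fixed-window counter that checks every configured window before accepting a request. The class name `MultiWindowLimiter`, its parameters, and the example limits are all hypothetical, and a real deployment would usually keep these counters in a shared store rather than in process memory.

```python
import time
from collections import defaultdict


class MultiWindowLimiter:
    """Fixed-window counters; a request must fit under every configured limit."""

    def __init__(self, limits, clock=time.time):
        # `limits` maps a window length in seconds to the maximum number of
        # requests allowed in that window, e.g. {1: 5, 3600: 1000} means
        # 5 per second AND 1000 per hour (illustrative numbers).
        self.limits = dict(limits)
        self.clock = clock
        # Key: (client_id, window_seconds, window_index) -> requests counted.
        # Old windows are never purged here, to keep the sketch short.
        self.counts = defaultdict(int)

    def allow(self, client_id):
        now = self.clock()
        keys = [(client_id, w, int(now // w)) for w in self.limits]
        # Reject if any window is already full; a rejected call is not counted.
        if any(self.counts[k] >= self.limits[k[1]] for k in keys):
            return False
        for k in keys:
            self.counts[k] += 1
        return True
```

For example, `MultiWindowLimiter({1: 5, 3600: 1000})` would accept a call only while the client is under both the per-second and the per-hour limit.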

API rate limiting might even be implemented differently depending on whether you are authenticated or not. Authenticated API users (or API consumers that have included their credentials in the API call) might be allowed more requests than anonymous users.
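One way to picture this is a lookup that picks a quota based on whether the request carries credentials. The sketch below is only an assumption about how that could look: the function name `hourly_limit`, the header names it checks, and both quota values are made up for illustration, and a real system would validate the credentials rather than just check that they are present.

```python
# Illustrative quotas only; the article does not prescribe specific numbers.
ANONYMOUS_LIMIT_PER_HOUR = 60
AUTHENTICATED_LIMIT_PER_HOUR = 5000


def hourly_limit(headers):
    """Return the hourly request quota for a request, based on its headers."""
    # The mere presence of credentials stands in for real authentication here;
    # a production system would verify the key or token first.
    has_credentials = bool(headers.get("Authorization") or headers.get("X-API-Key"))
    if has_credentials:
        return AUTHENTICATED_LIMIT_PER_HOUR
    return ANONYMOUS_LIMIT_PER_HOUR
```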

Do I need to implement API rate limiting?

Most likely, yes. But it’s important to understand the reasons why you might need it. If you are going to measure the success (or failure) of rate limits, you have to have a clear and defined purpose.

For example, managing the volume and rate at which transactions come into your applications can protect against DDoS (Distributed Denial of Service) attacks and other issues that would impact server performance or availability and degrade the end user experience.

To do this, rate limits should be implemented to protect against specific clients or users, as well as globally to cover the overall traffic allowed across all clients. Individual APIs or operations may need custom rate limits to account for specific business impact, ability to scale, or infrastructure/resource costs.
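The combination of a per-client limit and a global limit can be sketched as two counters checked together within one time window. Everything named here (`ClientAndGlobalLimiter`, its parameters, the 60-second default window) is a hypothetical choice for illustration, not a reference to any particular gateway or product.

```python
import time
from collections import Counter


class ClientAndGlobalLimiter:
    """One fixed window with a cap per client and a cap across all clients."""

    def __init__(self, per_client, global_limit, window_seconds=60, clock=time.time):
        self.per_client = per_client
        self.global_limit = global_limit
        self.window_seconds = window_seconds
        self.clock = clock
        self.window = None              # index of the window being counted
        self.client_counts = Counter()  # accepted requests per client
        self.total = 0                  # accepted requests across all clients

    def allow(self, client_id):
        window = int(self.clock() // self.window_seconds)
        if window != self.window:
            # A new window has started, so all counters start over.
            self.window = window
            self.client_counts.clear()
            self.total = 0
        if self.total >= self.global_limit:
            return False  # overall traffic cap reached
        if self.client_counts[client_id] >= self.per_client:
            return False  # this client's own cap reached
        self.client_counts[client_id] += 1
        self.total += 1
        return True
```

With `per_client=2` and `global_limit=3`, a single client is cut off after two calls in a window, and a third client is refused once the first two have used up the shared budget.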

However, rate limiting is only one step in protecting APIs against DDoS attacks and you can’t rely exclusively on rate limits to avoid them.

What are my options for implementing API rate limits?

- Hard Stop: This means an API consumer will get an HTTP 429 (Too Many Requests) error when they call your API if they are over their limit.

- Soft Stop: In this case, you might have a grace period where calls can continue to be made for a short period after the limit is reached.

- Throttled Stop: You might just want to enforce a slowdown on calls made over the limit. This way users can continue to make calls, but they will just be slower because they are over the limit.

- Billable Stop: Here you might just charge the API consumer for calls made over their limit. Obviously, this would only work for authenticated API users but can be a valid solution.
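The four options above can be compared side by side in a small decision function. This is a sketch under stated assumptions: the names `Policy`, `Decision`, and `decide` are hypothetical, and the soft-stop grace period is modeled as a number of extra calls rather than a span of time, purely to keep the example short.

```python
from dataclasses import dataclass
from enum import Enum


class Policy(Enum):
    HARD_STOP = "hard"
    SOFT_STOP = "soft"
    THROTTLED_STOP = "throttled"
    BILLABLE_STOP = "billable"


@dataclass
class Decision:
    status: int                 # HTTP status code to return
    delay_seconds: float = 0.0  # artificial slowdown applied before responding
    billable: bool = False      # whether this call is charged as overage


def decide(policy, used, limit, grace=0, delay_seconds=1.0):
    """Decide how to treat a request when `used` calls were already made."""
    if used < limit:
        return Decision(status=200)  # under the limit: serve normally
    if policy is Policy.SOFT_STOP and used < limit + grace:
        return Decision(status=200)  # still inside the grace allowance
    if policy is Policy.THROTTLED_STOP:
        return Decision(status=200, delay_seconds=delay_seconds)
    if policy is Policy.BILLABLE_STOP:
        return Decision(status=200, billable=True)
    # Hard stop, or a soft stop whose grace allowance is used up.
    return Decision(status=429)
```

For instance, `decide(Policy.HARD_STOP, used=10, limit=10)` yields a 429 decision, while the same call with `Policy.THROTTLED_STOP` yields a 200 decision carrying a one-second delay.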

API rate limits minimize backend costs and improve user experience

API rate limiting can limit backend expenses and reduce costs. For example, say there is a line of thermostats that malfunction and go into a loop where they are calling your weather API over and over. While this might not take down your API, it will increase operational costs. By limiting those backend server calls, you can protect resources.

Getting a lot of API calls could mean that your backend servers can’t keep up properly. This could lead to bottlenecks and the equivalent of driving on the highway during rush hour traffic, negatively impacting user experience.

This could slow everyone down as requests are queued up. Slow API calls could mean things like really slow web pages and very unhappy users. By using API rate limits, you can proactively prevent slowdowns like this and keep customer experiences positive.
