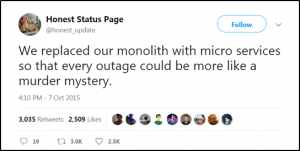

When things break in a microservice architecture, determining the root cause is challenging: where did it fail, and why? So many things could go wrong that there are many places to look. Performing root cause analysis in a microservice environment has, tongue in cheek, been referred to as “solving a murder mystery.”

Imagine that you have all your microservices running in production, and suddenly you are awoken by a frantic voice: “All of our orders are being rejected and no one knows why!” Time to roll out of bed and grab your pipe and magnifying glass, because you’re about to start playing detective.

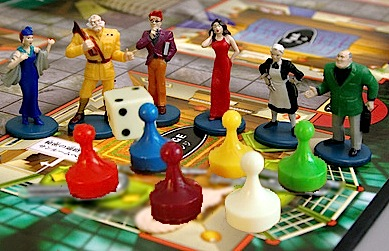

Imagine that you’re playing a game of Cluedo (or Clue, as it’s known in North America). Cluedo/Clue is a murder mystery game. The object of the game is to determine who murdered the game’s victim, “Dr. Black”/“Mr. Boddy”, where the crime took place, and which weapon was used. You win the game by answering three questions: who, where, and how.

In Clue, the following items are at play to determine the solution:

Where: 9 rooms (Kitchen, Ballroom, Conservatory, Dining Room, Billiard Room, Library, Lounge, Hall, Study)

How: 6 weapons (candlestick, knife, lead pipe, revolver, rope, and spanner/wrench)

Who: 10 suspects (the original six, Colonel Mustard, Miss Scarlet, Mr. Green, Mrs. Peacock, Mrs. White, and Professor Plum, plus the new suspects M. Brunette, Madam Rose, Sgt. Gray, and Miss Peach).

So, 9 rooms, 6 weapons, and 10 suspects would be: 9 * 6 * 10 = 540. Therefore, there are 540 possible solutions!

With a microservice deployment, the complexity is far greater. Let’s investigate…

Where: A microservice deployment, consisting of small isolated services, typically would be deployed to some form of abstraction or virtualization: cloud provider, virtual machine, container; in some cases, all of them at the same time! On top of this abstraction, we have our actual software (business logic). Now, throw in all the other components that could be at play: load balancer, DNS, API Gateway, web app, datastore, identity provider, … The list of where something could go wrong can be daunting and seemingly infinite. In addition, oftentimes the location of the failure is the symptom, but not the cause. For example, the service failed, but it is failing because the database is unavailable.

How: Ephemeral services, running in a distributed manner across a vast network of virtual machines, interacting with other services running in the same fashion: what could possibly go wrong? Well, to put it mildly, anything. During my time managing SaaS deployments, I’ve seen many different murder weapons at play. Even the seemingly benign can have deadly consequences that destabilize our microservice environments. Here are a few examples:

- Murder in the dark: In the data center, the power drops, crashing a server and corrupting a database. Thankfully, we’d tested our disaster recovery and we could restore from the last snapshot.

- Silent Poisoning: A service has a broken dependency that is not handled properly. Instead of panicking, the service returns a success code, thus swallowing the error. All the health checks appear to be fine.

- The Perfect Swarm: “Andrea Gail” sinks, because the swarm managers, under high load, fail to communicate with each other. In a perfect storm, the leader is lost, and unable to elect a new one, the whole cluster can come crashing down.

- Avalanche: Failure to horizontally scale rapidly and on-demand. Our customer decided to launch a massive new ad campaign featuring free downloads from an A-list music star, but our scaling strategy failed to meet demand.
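To make the "Silent Poisoning" case above concrete, here is a minimal sketch of a handler that swallows a dependency failure next to one that surfaces it. Everything in it is hypothetical and for illustration only: `DependencyError`, `fetch_inventory`, and both handler names are assumptions, not part of any real service or library.

```python
class DependencyError(Exception):
    """Raised when a downstream dependency call fails (hypothetical)."""


def fetch_inventory():
    # Hypothetical dependency call that is currently broken.
    raise DependencyError("inventory service unreachable")


def handle_order_swallowing():
    """Anti-pattern: the failure is swallowed and a success code is returned."""
    try:
        fetch_inventory()
    except DependencyError:
        pass  # the error disappears here, so monitoring sees nothing wrong
    return 200, {"status": "ok"}


def handle_order_surfacing():
    """Safer: report the dependency failure so callers and monitors can see it."""
    try:
        fetch_inventory()
    except DependencyError as exc:
        return 503, {"status": "error", "detail": str(exc)}
    return 200, {"status": "ok"}
```

With the first handler, every caller and every status-code-based check sees a success while orders are quietly failing; the second at least leaves a clue at the scene.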

Who: The who becomes the most critical question to solve. Even if we can understand the where and how, if we do not solve the question of who, the murders will continue to happen. We must find the culprit(s) and eradicate them. Unfortunately, determining the culprit can be difficult, because it is a very nuanced idea. The who could be a failure by a human or automated process, a system failure, a software failure, or a multitude of other types of failures. Consider these examples:

- Faulty integration testing: “Mr. Jenkins, in the kitchen”. All of your tests pass, but the software that’s deployed does not behave as you expected.

- Lack of visibility into the enterprise: “Mr. Magoo, in the bedroom”. Your workloads are starting to get backed up, but you cannot see it happening until it’s too late.

- Dependent Systems Outages: “Mrs. O’Leary’s Cow, in the barn”. You lose a database, someone mangles the DNS entries, or a lantern is kicked over in “us-west”.

Prevent the Murder

The best advice is to prevent the crime from happening in the first place. A couple of obvious starting points:

- Don’t assume the cloud or your data center is reliable. Take advantage of high availability and think about disaster recovery upfront. Consider that AWS Oregon “decided” to lose network connectivity for 10 minutes. As a result, look how Netflix is investing in region evacuation.

- Be a precog on crime: anticipate what could happen in the future. I recall a time when, by mistake, we used some expired New Relic license keys when attempting to scale out some new services; of course, they crashed at startup. This could’ve been easily avoided with some forethought: “Hey, we need a key management system to help us identify expiry before it happens.”
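The expiry check mentioned in the last point can be sketched in a few lines. This is only an illustration under stated assumptions: the `keys_expiring_soon` function, the 30-day warning window, and the key names in the usage example are all hypothetical, and a real key management system would do considerably more.

```python
from datetime import date, timedelta


def keys_expiring_soon(keys, today, warn_days=30):
    """Return the names of keys that are expired or expire within warn_days.

    `keys` maps a key name to its expiry date (hypothetical data shape).
    """
    cutoff = today + timedelta(days=warn_days)
    return sorted(name for name, expires in keys.items() if expires <= cutoff)
```

For example, calling it with `{"monitoring-license": date(2024, 1, 10), "tls-cert": date(2025, 1, 1)}` and `today=date(2024, 1, 1)` flags only `monitoring-license`, which gives you time to renew it before the next scale-out.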

Microservice Forensics

We cannot always prevent the murder from happening. The good news is that a microgateway can play the role of Hercule Poirot or Sherlock Holmes by providing the following in your microservice deployment:

- Centralized logging & metrics so that you can confirm the statements of suspects. You should aggregate log, trace, and metric events from your microservices into a centralized location for analysis and visualization.

- Health checks for each service so you can confirm the suspect’s alibi. A health check is more than a ping to see that the process for the service is running. It should confirm that the service is running normally and that it is interacting with its dependent services as expected. An example health check would be an API endpoint /health that performs various checks on the system (memory, disk space), the datastore (database connection pool), dependent microservices (response status from other services), etc. All of these combined give the actual status of the service.

- Tracing is the ability to trace the flow of requests across services (Dapper / Zipkin). Tracing answers the question of “where that time was spent” during a distributed request, or what the suspect was doing around the time of the murder. Tracing helps to measure things like latency, error rates, and throughput.

- Access logging for a service so that you can see what entered and exited it. Access logging acts as the security camera at a doorway, capturing the timing of all the comings and goings of potential suspects.
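The health check described above, one that combines several individual checks into a single status, could look roughly like the sketch below. It is an illustration rather than any microgateway's actual implementation: `check_disk`, `run_health_checks`, the 5% free-space threshold, and the response shape are all assumptions, and the result would still need to be wired to a /health endpoint in whatever web framework you use.

```python
import shutil


def check_disk(path="/", min_free_ratio=0.05):
    """System check: True if at least min_free_ratio of the disk is free."""
    usage = shutil.disk_usage(path)
    return usage.free / usage.total >= min_free_ratio


def run_health_checks(checks):
    """Run each named check and combine the results into one status.

    `checks` maps a name to a zero-argument callable returning True/False.
    A check that raises is reported as down instead of crashing the endpoint.
    """
    results = {}
    for name, check in checks.items():
        try:
            results[name] = "up" if check() else "down"
        except Exception as exc:
            results[name] = f"down: {exc}"
    healthy = all(value == "up" for value in results.values())
    status_code = 200 if healthy else 503
    return status_code, {"status": "up" if healthy else "down", "checks": results}
```

A /health handler might call `run_health_checks({"disk": check_disk, "database": ping_database, "orders-service": ping_orders})`, where `ping_database` and `ping_orders` are hypothetical callables you would supply. Returning 503 when any single check fails is a design choice: it means the alibi only holds if every dependency vouches for the service.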

As ever, with any technology, there is no silver bullet, but the microgateway does provide you with a silver magnifying glass that can help you become the detective and solve the whodunit mystery of a service outage!

For more microgateway info, read the other blogs in this series: